EasyEnsembleClassifier

- class imblearn.ensemble.EasyEnsembleClassifier(n_estimators=10, estimator=None, *, warm_start=False, sampling_strategy='auto', replacement=False, n_jobs=None, random_state=None, verbose=0)

Bag of balanced boosted learners also known as EasyEnsemble.

The classifier is an ensemble of AdaBoost learners, each trained on a different balanced bootstrap sample [1]. Balancing is achieved by random under-sampling.

Read more in the User Guide.

Added in version 0.4.
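The balancing step described above can be sketched in plain Python. This is a minimal illustration, not the library's implementation: the helper name `balanced_subset_indices` is hypothetical, and the step where an AdaBoost learner is fitted on each subset is omitted.

```python
import random
from collections import Counter


def balanced_subset_indices(y, rng):
    """Return indices of a class-balanced subset drawn by random under-sampling.

    Hypothetical helper for illustration only.
    """
    by_class = {}
    for idx, label in enumerate(y):
        by_class.setdefault(label, []).append(idx)
    # Every class is reduced to the size of the smallest class.
    n_min = min(len(indices) for indices in by_class.values())
    selected = []
    for label in sorted(by_class):
        # Sampling without replacement, analogous to replacement=False.
        selected.extend(rng.sample(by_class[label], n_min))
    return sorted(selected)


y = [0] * 10 + [1] * 90  # 10 minority samples, 90 majority samples
rng = random.Random(0)

# One balanced subset per ensemble member; each would feed one AdaBoost learner.
subsets = [balanced_subset_indices(y, rng) for _ in range(3)]
subset_counts = [Counter(y[i] for i in subset) for subset in subsets]
```

Each subset keeps all minority samples and a different random draw of the majority class, so the ensemble as a whole sees more of the majority data than any single learner.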

- Parameters:

- n_estimatorsint, default=10

Number of AdaBoost learners in the ensemble.

- estimatorestimator object, default=AdaBoostClassifier()

The base AdaBoost classifier used in the inner ensemble. Note that you can set the number of inner learners by passing your own instance.

Added in version 0.10.

- warm_startbool, default=False

When set to True, reuse the solution of the previous call to fit and add more estimators to the ensemble; otherwise, fit a whole new ensemble.

- sampling_strategyfloat, str, dict, callable, default=’auto’

Sampling information to sample the data set.

When float, it corresponds to the desired ratio of the number of samples in the minority class over the number of samples in the majority class after resampling. Therefore, the ratio is expressed as \(\alpha_{us} = N_{m} / N_{rM}\) where \(N_{m}\) is the number of samples in the minority class and \(N_{rM}\) is the number of samples in the majority class after resampling.

Warning: float is only available for binary classification. An error is raised for multi-class classification.

When str, specify the class targeted by the resampling. The number of samples in the different classes will be equalized. Possible choices are:

  - 'majority': resample only the majority class;
  - 'not minority': resample all classes but the minority class;
  - 'not majority': resample all classes but the majority class;
  - 'all': resample all classes;
  - 'auto': equivalent to 'not minority'.

When dict, the keys correspond to the targeted classes. The values correspond to the desired number of samples for each targeted class.

When callable, a function taking y and returning a dict. The keys correspond to the targeted classes. The values correspond to the desired number of samples for each class.

- replacementbool, default=False

Whether to sample randomly with replacement.

- n_jobsint, default=None

Number of CPU cores used to run fit and predict in parallel.

None means 1 unless in a joblib.parallel_backend context. -1 means using all processors. See Glossary for more details.

- random_stateint, RandomState instance, default=None

Control the randomization of the algorithm.

If int, random_state is the seed used by the random number generator;

If RandomState instance, random_state is the random number generator;

If None, the random number generator is the RandomState instance used by np.random.

- verboseint, default=0

Controls the verbosity of the building process.
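For the float and callable forms of sampling_strategy described above, the arithmetic can be sketched as follows. Both helper names are hypothetical, and truncating the float result to an integer is an assumption made for this sketch. It shows only the formula \(N_{rM} = N_{m} / \alpha_{us}\) and the shape of the dict a callable is expected to return.

```python
from collections import Counter


def majority_target_from_ratio(y, alpha):
    """Majority-class size after resampling for a float sampling_strategy.

    Rearranges alpha = N_m / N_rM into N_rM = N_m / alpha.
    Truncation to int is an assumption of this sketch.
    """
    counts = Counter(y)
    n_minority = min(counts.values())
    return int(n_minority / alpha)


def cap_at_twice_minority(y):
    """Hypothetical callable: takes y and returns {class label: desired count}."""
    counts = Counter(y)
    n_minority = min(counts.values())
    return {label: min(n, 2 * n_minority) for label, n in counts.items()}


y = [0] * 100 + [1] * 900

target_half = majority_target_from_ratio(y, 0.5)   # 100 / 0.5
target_equal = majority_target_from_ratio(y, 1.0)  # fully balanced
callable_result = cap_at_twice_minority(y)
```

A function with the same signature as `cap_at_twice_minority` is the kind of object the callable option accepts: it receives y and returns the desired number of samples per class.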

- Attributes:

- estimator_estimator

The base estimator from which the ensemble is grown.

Added in version 0.10.

- estimators_list of estimators

The collection of fitted base estimators.

- estimators_samples_list of arrays

The subset of drawn samples for each base estimator.

- estimators_features_list of arrays

The subset of drawn features for each base estimator.

- classes_array, shape (n_classes,)

The class labels.

- n_classes_int or list

The number of classes.

- n_features_in_int

Number of features in the input dataset.

Added in version 0.9.

- feature_names_in_ndarray of shape (n_features_in_,)

Names of features seen during fit. Defined only when X has feature names that are all strings.

Added in version 0.9.

See also

BalancedBaggingClassifier: Bagging classifier for which each base estimator is trained on a balanced bootstrap.

BalancedRandomForestClassifier: Random forest applying random under-sampling to balance the different bootstraps.

RUSBoostClassifier: AdaBoost classifier where each bootstrap is balanced using random under-sampling at each round of boosting.

Notes

The method is described in [1].

Supports multi-class resampling by sampling each class independently.

References

Examples

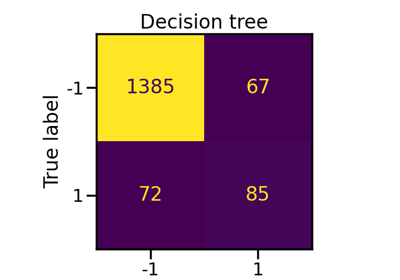

    >>> from collections import Counter
    >>> from sklearn.datasets import make_classification
    >>> from sklearn.model_selection import train_test_split
    >>> from sklearn.metrics import confusion_matrix
    >>> from imblearn.ensemble import EasyEnsembleClassifier
    >>> X, y = make_classification(n_classes=2, class_sep=2,
    ... weights=[0.1, 0.9], n_informative=3, n_redundant=1, flip_y=0,
    ... n_features=20, n_clusters_per_class=1, n_samples=1000, random_state=10)
    >>> print('Original dataset shape %s' % Counter(y))
    Original dataset shape Counter({1: 900, 0: 100})
    >>> X_train, X_test, y_train, y_test = train_test_split(X, y,
    ...                                                     random_state=0)
    >>> eec = EasyEnsembleClassifier(random_state=42)
    >>> eec.fit(X_train, y_train)
    EasyEnsembleClassifier(...)
    >>> y_pred = eec.predict(X_test)
    >>> print(confusion_matrix(y_test, y_pred))
    [[ 23   0]
     [  2 225]]

Methods

| Method | Description |
|---|---|
| decision_function(X, **params) | Average of the decision functions of the base classifiers. |
| fit(X, y) | Build a Bagging ensemble of estimators from the training set (X, y). |
| get_metadata_routing() | Get metadata routing of this object. |
| get_params([deep]) | Get parameters for this estimator. |
| predict(X, **params) | Predict class for X. |
| predict_log_proba(X, **params) | Predict class log-probabilities for X. |
| predict_proba(X, **params) | Predict class probabilities for X. |
| score(X, y[, sample_weight]) | Return accuracy on provided data and labels. |
| set_fit_request() | No-op. |
| set_params(**params) | Set the parameters of this estimator. |
| set_score_request(*[, sample_weight]) | Configure whether metadata should be requested to be passed to the score method. |

- property base_estimator_

Attribute for older sklearn version compatibility.

- decision_function(X, **params)

Average of the decision functions of the base classifiers.

- Parameters:

- X{array-like, sparse matrix} of shape (n_samples, n_features)

The training input samples. Sparse matrices are accepted only if they are supported by the base estimator.

- **paramsdict

Parameters routed to the decision_function method of the sub-estimators via the metadata routing API.

Added in version 1.7: Only available if sklearn.set_config(enable_metadata_routing=True) is set. See Metadata Routing User Guide for more details.

- Returns:

- scorendarray of shape (n_samples, k)

The decision function of the input samples. The columns correspond to the classes in sorted order, as they appear in the attribute classes_. Regression and binary classification are special cases with k == 1, otherwise k == n_classes.

- property estimators_samples_

The subset of drawn samples for each base estimator.

Returns a dynamically generated list of indices identifying the samples used for fitting each member of the ensemble, i.e., the in-bag samples.

Note: the list is re-created at each call to the property in order to reduce the object memory footprint by not storing the sampling data. Thus fetching the property may be slower than expected.

- fit(X, y)

Build a Bagging ensemble of estimators from the training set (X, y).

- Parameters:

- X{array-like, sparse matrix} of shape (n_samples, n_features)

The training input samples. Sparse matrices are accepted only if they are supported by the base estimator.

- yarray-like of shape (n_samples,)

The target values (class labels in classification, real numbers in regression).

- Returns:

- selfobject

Fitted estimator.

- get_metadata_routing()

Get metadata routing of this object.

Please check User Guide on how the routing mechanism works.

Added in version 1.5.

- Returns:

- routingMetadataRouter

A MetadataRouter encapsulating routing information.

- get_params(deep=True)

Get parameters for this estimator.

- Parameters:

- deepbool, default=True

If True, will return the parameters for this estimator and contained subobjects that are estimators.

- Returns:

- paramsdict

Parameter names mapped to their values.

- predict(X, **params)

Predict class for X.

The predicted class of an input sample is computed as the class with the highest mean predicted probability. If base estimators do not implement a predict_proba method, then it resorts to voting.

- Parameters:

- X{array-like, sparse matrix} of shape (n_samples, n_features)

The training input samples. Sparse matrices are accepted only if they are supported by the base estimator.

- **paramsdict

Parameters routed to the predict_proba (if available) or the predict method (otherwise) of the sub-estimators via the metadata routing API.

Added in version 1.7: Only available if sklearn.set_config(enable_metadata_routing=True) is set. See Metadata Routing User Guide for more details.

- Returns:

- yndarray of shape (n_samples,)

The predicted classes.
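The rule "class with the highest mean predicted probability" can be sketched in plain Python. The function name and the probability values are hypothetical; the real estimator works on arrays produced by its fitted sub-estimators.

```python
def predict_from_mean_proba(per_estimator_proba, classes):
    """Pick, for each sample, the class with the highest mean probability.

    per_estimator_proba: one (n_samples x n_classes) nested list per estimator.
    """
    n_estimators = len(per_estimator_proba)
    predictions = []
    # zip(*...) groups the rows that belong to the same sample.
    for sample_rows in zip(*per_estimator_proba):
        mean_row = [sum(column) / n_estimators for column in zip(*sample_rows)]
        best = max(range(len(classes)), key=mean_row.__getitem__)
        predictions.append(classes[best])
    return predictions


# Two estimators, two samples, two classes (made-up probabilities).
proba_a = [[0.9, 0.1], [0.4, 0.6]]
proba_b = [[0.6, 0.4], [0.7, 0.3]]

predicted = predict_from_mean_proba([proba_a, proba_b], classes=[0, 1])
```

For the second sample, the first estimator alone favours class 1, but the mean probabilities are roughly 0.55 and 0.45, so the ensemble predicts class 0.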

- predict_log_proba(X, **params)

Predict class log-probabilities for X.

The predicted class log-probabilities of an input sample are computed as the log of the mean predicted class probabilities of the base estimators in the ensemble.

- Parameters:

- X{array-like, sparse matrix} of shape (n_samples, n_features)

The training input samples. Sparse matrices are accepted only if they are supported by the base estimator.

- **paramsdict

Parameters routed to the predict_log_proba, the predict_proba or the proba method of the sub-estimators via the metadata routing API. The routing is tried in the mentioned order depending on whether this method is available on the sub-estimator.

Added in version 1.7: Only available if sklearn.set_config(enable_metadata_routing=True) is set. See Metadata Routing User Guide for more details.

- Returns:

- pndarray of shape (n_samples, n_classes)

The class log-probabilities of the input samples. The order of the classes corresponds to that in the attribute classes_.
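The phrase "log of the mean predicted class probabilities" means the averaging happens before the logarithm. A minimal sketch in plain Python, with a hypothetical function name and made-up probabilities:

```python
import math


def log_of_mean_proba(per_estimator_proba):
    """Average the per-estimator probabilities, then take the log."""
    n_estimators = len(per_estimator_proba)
    result = []
    for sample_rows in zip(*per_estimator_proba):
        mean_row = [sum(column) / n_estimators for column in zip(*sample_rows)]
        # A mean probability of zero maps to -inf instead of raising an error.
        result.append([math.log(p) if p > 0 else float("-inf") for p in mean_row])
    return result


# Two estimators, one sample, two classes.
proba_a = [[0.5, 0.5]]
proba_b = [[1.0, 0.0]]

log_proba = log_of_mean_proba([proba_a, proba_b])
```

Here the mean probabilities are 0.75 and 0.25, so both log values are finite. Averaging the logs instead would give minus infinity for the second class, because one estimator assigns it probability zero.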

- predict_proba(X, **params)

Predict class probabilities for X.

The predicted class probabilities of an input sample are computed as the mean predicted class probabilities of the base estimators in the ensemble. If base estimators do not implement a predict_proba method, then it resorts to voting and the predicted class probabilities of an input sample represent the proportion of estimators predicting each class.

- Parameters:

- X{array-like, sparse matrix} of shape (n_samples, n_features)

The training input samples. Sparse matrices are accepted only if they are supported by the base estimator.

- **paramsdict

Parameters routed to the predict_proba (if available) or the predict method (otherwise) of the sub-estimators via the metadata routing API.

Added in version 1.7: Only available if sklearn.set_config(enable_metadata_routing=True) is set. See Metadata Routing User Guide for more details.

- Returns:

- pndarray of shape (n_samples, n_classes)

The class probabilities of the input samples. The order of the classes corresponds to that in the attribute classes_.
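The voting fallback mentioned above, where probabilities are the proportion of estimators predicting each class, can be sketched as follows. The function name and the votes are hypothetical.

```python
from collections import Counter


def vote_proportions(per_estimator_labels, classes):
    """Turn hard predictions into per-class vote proportions.

    per_estimator_labels: one list of predicted labels per estimator.
    """
    n_estimators = len(per_estimator_labels)
    proba = []
    # zip(*...) groups the votes cast for the same sample.
    for votes in zip(*per_estimator_labels):
        counts = Counter(votes)
        proba.append([counts[label] / n_estimators for label in classes])
    return proba


# Four estimators, three samples (made-up hard predictions).
labels = [
    [0, 1, 1],
    [0, 1, 0],
    [1, 1, 0],
    [0, 1, 0],
]

voted_proba = vote_proportions(labels, classes=[0, 1])
```

The columns follow the order given in `classes`, mirroring how the returned probabilities follow the order of the classes_ attribute.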

- score(X, y, sample_weight=None)

Return accuracy on provided data and labels.

In multi-label classification, this is the subset accuracy which is a harsh metric since you require for each sample that each label set be correctly predicted.

- Parameters:

- Xarray-like of shape (n_samples, n_features)

Test samples.

- yarray-like of shape (n_samples,) or (n_samples, n_outputs)

True labels for X.

- sample_weightarray-like of shape (n_samples,), default=None

Sample weights.

- Returns:

- scorefloat

Mean accuracy of self.predict(X) w.r.t. y.
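As a worked illustration of mean accuracy, the sketch below rebuilds label vectors from the confusion matrix printed in the Examples section (23, 0, 2, 225) and computes the unweighted accuracy by hand. The helper name is hypothetical and sample_weight is not handled.

```python
def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted label equals the true label."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


# Rows of the confusion matrix are true classes, columns are predictions:
# class 0: 23 predicted as 0, 0 predicted as 1
# class 1: 2 predicted as 0, 225 predicted as 1
y_true = [0] * 23 + [1] * 227
y_pred = [0] * 23 + [0] * 2 + [1] * 225

acc = accuracy(y_true, y_pred)  # 248 correct out of 250
```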

- set_fit_request() → EasyEnsembleClassifier

No-op.

Calling this method has no effect.

- Returns:

- selfobject

The updated object.

- set_params(**params)

Set the parameters of this estimator.

The method works on simple estimators as well as on nested objects (such as Pipeline). The latter have parameters of the form <component>__<parameter> so that it’s possible to update each component of a nested object.

- Parameters:

- **paramsdict

Estimator parameters.

- Returns:

- selfestimator instance

Estimator instance.

- set_score_request(*, sample_weight: bool | None | str = '$UNCHANGED$') → EasyEnsembleClassifier

Configure whether metadata should be requested to be passed to the score method.

Note that this method is only relevant when this estimator is used as a sub-estimator within a meta-estimator and metadata routing is enabled with enable_metadata_routing=True (see sklearn.set_config). Please check the User Guide on how the routing mechanism works.

The options for each parameter are:

  - True: metadata is requested, and passed to score if provided. The request is ignored if metadata is not provided.
  - False: metadata is not requested and the meta-estimator will not pass it to score.
  - None: metadata is not requested, and the meta-estimator will raise an error if the user provides it.
  - str: metadata should be passed to the meta-estimator with this given alias instead of the original name.

The default (sklearn.utils.metadata_routing.UNCHANGED) retains the existing request. This allows you to change the request for some parameters and not others.

Added in version 1.3.

- Parameters:

- sample_weightstr, True, False, or None, default=sklearn.utils.metadata_routing.UNCHANGED

Metadata routing for sample_weight parameter in score.

- Returns:

- selfobject

The updated object.